---

title: Designing Hybrid Operations

url: "https://www.lubauram.com/blog/designing-hybrid-operations/"

description: AI; Operations; Hybrid operations

image: "https://www.lubauram.com/_assets/processed/L1KMkhMMgLkJsKiFYMuzR_goN64FEMtPw79e46g1ut4/q:85/w:1366/h:768/fn:Y3NtX0xVQkFfQmxvZ19CaWxkZXJfXzE5MjB4MTA4MF9weF9fXzdfXzQ2ODQ4YThhNzA:t/cb:916bfbe3b1ade80802e315eef60b61149ff38dac/bG9jYWw6L2ZpbGVhZG1pbi9CaWxkZXIvQmxvZy9MVUJBX0Jsb2dfQmlsZGVyX18xOTIweDEwODBfcHhfX183Xy5wbmc"

date: 2026-04-13

modified: 2026-04-13

lastUpdated: 2026-04-13

categories:

- (Digital) Transformation

- AI

- Highlights

---

# Designing Hybrid Operations

13.04.2026

Designing Hybrid Operations: How Humans and AI Execute Together
=================================================================

For years, the debate around AI in operations has been framed incorrectly. Too often, the question has been whether machines will replace humans. In real operational environments, that framing is not only unhelpful – it is wrong.

The organizations seeing real impact from AI are not replacing people. They are **redesigning workflows** so humans and machines operate together, each doing what they do best. This shift from automation to augmentation is not accidental. It requires deliberate design choices, leadership alignment and cultural change. This article outlines a **repeatable methodology** for building hybrid human–machine operations that scale reliably and responsibly.

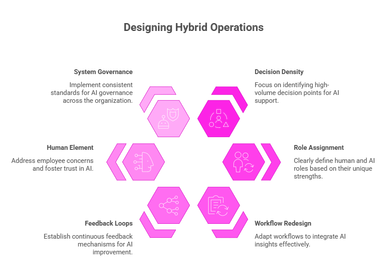

**Step 1: Identify Decision Density, Not Tasks**
------------------------------------------------

The first mistake many organizations make is starting with task automation. They ask, *“Which tasks can AI do faster or cheaper?”* That leads to incremental gains, but rarely to transformation.

A more effective starting point is **decision density**:

Where in the operational flow are humans making frequent, repetitive, or high-volume decisions based on partial information?

Research from [MIT Sloan Management Review](http://mit-bcg-expanding-ai-impact-with-organizational-learning-oct-2020.pdf) shows that AI delivers the most value when it supports complex decision-making rather than simple task execution \[1\]. In operations, these decision points often include triage, prioritization, validation, exception handling or risk assessment.

### **Methodology principle:**

Map workflows by decisions, not activities.
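One way to make this mapping concrete is to describe each workflow step by the decisions made there and rank the steps accordingly. The sketch below is illustrative only: the `DecisionPoint` fields, the example steps and the scoring formula (decision volume weighted by the share of decisions made on partial information) are assumptions, not a definition taken from the cited research.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class DecisionPoint:
    """One workflow step, described by its decisions (hypothetical model)."""
    step: str
    decisions_per_day: int      # how often a human decides at this step
    partial_info_share: float   # fraction of decisions made on incomplete data (0..1)


def decision_density(point: DecisionPoint) -> float:
    """Assumed score: decision volume weighted by the partial-information share."""
    return point.decisions_per_day * point.partial_info_share


def rank_by_density(points: List[DecisionPoint]) -> List[DecisionPoint]:
    """Return decision points ordered from highest to lowest density."""
    return sorted(points, key=decision_density, reverse=True)


# Made-up numbers, for illustration only.
workflow = [
    DecisionPoint("ticket triage", decisions_per_day=400, partial_info_share=0.5),
    DecisionPoint("invoice validation", decisions_per_day=150, partial_info_share=0.2),
    DecisionPoint("exception handling", decisions_per_day=60, partial_info_share=0.9),
]

for point in rank_by_density(workflow):
    print(f"{point.step}: {decision_density(point):.0f}")
```

In this made-up example, triage ranks first even though exception handling has the highest share of partial-information decisions, because volume dominates the assumed score. A different weighting would produce a different ranking, which is a design choice each organization has to make.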

**Step 2: Assign Roles Based on Strengths**
-------------------------------------------

Once decision points are identified, the next step is to **explicitly separate what humans do best from what machines do best**.

Humans excel at:

1. Contextual judgment

2. Ethical reasoning

3. Handling ambiguity and exceptions

4. Accountability for outcomes

AI excels at:

1. Pattern recognition at scale

2. Consistency and speed

3. Processing large volumes of data

4. Detecting anomalies humans might miss

McKinsey’s research on AI in operations shows that the highest-performing organizations design AI to *support* human decisions, not replace them \[2\]. For example, AI can pre-classify cases, surface risks or recommend next actions, while humans retain authority for final decisions, especially in high-impact scenarios.

### **Methodology principle:**

Design AI as a decision partner, not a decision owner.
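A minimal sketch of this division of roles, assuming a hypothetical `Recommendation` record and an arbitrary confidence floor of 0.9: the AI pre-classifies and suggests, and the code only decides how much human review a case gets. A human makes the final decision in both paths.

```python
from dataclasses import dataclass
from enum import Enum


class Impact(Enum):
    LOW = "low"
    HIGH = "high"


@dataclass
class Recommendation:
    """An AI suggestion for one case (hypothetical structure)."""
    case_id: str
    suggested_action: str
    confidence: float   # model's self-reported confidence, 0..1
    impact: Impact      # business impact of getting this case wrong


def review_mode(rec: Recommendation, confidence_floor: float = 0.9) -> str:
    """Choose the depth of human review (assumed policy, for illustration).

    High-impact or low-confidence cases get a full human review.
    Everything else is still confirmed by a human, but as a quick check.
    """
    if rec.impact is Impact.HIGH or rec.confidence < confidence_floor:
        return "full_review"
    return "quick_confirm"


rec = Recommendation("case-001", "approve", confidence=0.97, impact=Impact.HIGH)
print(review_mode(rec))  # high impact, so a human reviews it in full
```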

**Step 3: Redesign the Workflow, Not Just the Tool**
----------------------------------------------------

Introducing AI into an unchanged workflow rarely works. If AI insights are delivered too late, in the wrong format, or without trust, they are ignored.

[Harvard Business Review](https://hbr.org/2018/07/collaborative-intelligence-humans-and-ai-are-joining-forces) highlights that successful human–AI collaboration requires **workflow redesign**, not just model deployment \[3\]. This includes:

1. When AI intervenes in the process

2. How recommendations are presented

3. What happens when humans disagree with AI

4. How feedback is captured to improve models

In practice, this often means simplifying steps, removing redundant approvals, and making AI outputs visible *at the moment of decision*, not as a separate report.

### **Methodology principle:**

If the workflow doesn’t change, impact won’t either.

**Step 4: Build Feedback Loops Into Daily Work**
------------------------------------------------

Hybrid operations only improve if humans can **teach the system** over time. This requires structured feedback loops where human overrides, corrections and exceptions are captured and reused.

[The World Economic Forum](https://www.weforum.org/stories/2025/09/human-centric-ai-shape-the-future-of-work/) emphasizes that responsible and effective AI systems depend on continuous human feedback and monitoring, especially in operational contexts \[4\]. Without this, AI models stagnate or drift away from real-world conditions.

Operationally, this means:

1. Logging when humans override AI recommendations

2. Understanding why overrides happen

3. Feeding that insight back into model refinement

### **Methodology principle:**

Every human correction is a learning opportunity.
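The three operational points above can be sketched as a small override log. Everything here is an assumption made for illustration: the `Decision` fields, the free-text reason codes and the sample data. A real system would likely persist these records and feed them into model evaluation or retraining.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Decision:
    """One logged decision: what the AI suggested and what the human did."""
    case_id: str
    ai_recommendation: str
    human_decision: str
    override_reason: Optional[str] = None   # recorded only when the human disagrees

    @property
    def is_override(self) -> bool:
        return self.human_decision != self.ai_recommendation


def override_rate(decisions: List[Decision]) -> float:
    """Share of decisions where the human overrode the AI."""
    if not decisions:
        return 0.0
    return sum(d.is_override for d in decisions) / len(decisions)


def top_override_reasons(decisions: List[Decision], n: int = 3) -> List[Tuple[str, int]]:
    """Most frequent reasons given for overrides, as input for model refinement."""
    reasons = Counter(
        d.override_reason for d in decisions if d.is_override and d.override_reason
    )
    return reasons.most_common(n)


# Made-up log entries, for illustration only.
log = [
    Decision("c1", "approve", "approve"),
    Decision("c2", "approve", "reject", "missing customer context"),
    Decision("c3", "reject", "reject"),
    Decision("c4", "approve", "reject", "missing customer context"),
]

print(f"override rate: {override_rate(log):.0%}")
print(top_override_reasons(log))
```

Recording the reason at the moment of the override, rather than reconstructing it later, is what turns a disagreement into usable training signal.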

**Step 5: Address the Human Side Explicitly**
---------------------------------------------

Technology alone does not create hybrid operations. People do.

Employees must trust AI enough to use it, but not so much that they stop thinking. This balance requires leadership attention, not just training.

[Studies show that resistance to AI](https://www.gartner.com/en/newsroom/press-releases/2024-06-27-overcoming-employee-fears-of-ai-to-drive-business-value) is rarely about technology; it is about fear of losing control, unclear accountability and lack of transparency \[5\]. Leaders must clarify:

1. Who is responsible for decisions

2. How AI recommendations should be challenged

3. What skills humans need to develop as AI takes over routine work

This often requires redefining roles, performance metrics and career paths, not just adding tools.

### **Methodology principle:**

Hybrid operations fail when people feel replaced instead of empowered.

**Step 6: Govern the System, Not the Individual Use Case**
----------------------------------------------------------

Finally, hybrid workflows must operate within a **governed system**. Consistency, fairness and accountability cannot be ensured one workflow at a time.

A platform-based approach allows organizations to apply the same standards for explainability, monitoring and escalation across all human–machine interactions. According to [OECD guidance on trustworthy AI](https://www.oecd.org/en/topics/policy-issues/future-of-work.html), systemic governance is essential to ensure AI supports human agency rather than undermines it \[6\].

### **Methodology principle:**

Responsibility scales only when governance is systemic.
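As a rough sketch of what "systemic" can mean in practice, the example below checks every workflow against one shared policy instead of encoding rules per use case. The `GovernancePolicy` and `WorkflowConfig` structures and their fields are hypothetical, chosen to mirror the standards named above (explainability, monitoring, escalation).

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class GovernancePolicy:
    """Standards every human-machine workflow must meet (hypothetical fields)."""
    require_explanation: bool = True
    require_monitoring: bool = True
    require_escalation_path: bool = True


@dataclass
class WorkflowConfig:
    """How one workflow is currently set up (hypothetical fields)."""
    name: str
    has_explanation: bool
    has_monitoring: bool
    escalation_contact: Optional[str]


def violations(workflow: WorkflowConfig, policy: GovernancePolicy) -> List[str]:
    """List the policy requirements this workflow does not meet."""
    found = []
    if policy.require_explanation and not workflow.has_explanation:
        found.append("missing explanation")
    if policy.require_monitoring and not workflow.has_monitoring:
        found.append("missing monitoring")
    if policy.require_escalation_path and not workflow.escalation_contact:
        found.append("missing escalation path")
    return found


# One policy, applied to every workflow. Example data is made up.
policy = GovernancePolicy()
workflows = [
    WorkflowConfig("claims triage", True, True, "ops-lead"),
    WorkflowConfig("invoice validation", False, True, None),
]

for wf in workflows:
    print(wf.name, violations(wf, policy) or "compliant")
```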


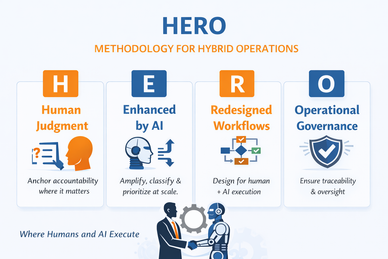

Be the HERO
-------------

Taken together, these steps form what I call the **HERO** methodology for hybrid operations. It starts with anchoring accountability in human judgment, uses AI to enhance decision-making at scale, deliberately redesigns workflows rather than inserting tools into existing processes, and embeds operational governance as a system-level capability.

**H**uman judgment

**E**nhanced by AI

**R**edesigned workflows

**O**perational governance

The HERO methodology is not about automation for efficiency’s sake. It is a design approach that ensures humans and machines execute together in a reliable, responsible and scalable way, turning AI from isolated use cases into real operational impact.

The future of operations is not human or machine. It is human **with** machine.

Organizations that succeed are not the ones with the most advanced algorithms, but the ones that deliberately redesign workflows, roles and leadership practices around hybrid execution.

Human–machine collaboration is not a technology trend. It is an operating model choice, and one that demands clarity, discipline and leadership.

**Sources**

1. [MIT Sloan Management Review – *Expanding AI’s Impact With Organizational Learning*](http://mit-bcg-expanding-ai-impact-with-organizational-learning-oct-2020.pdf)

2. McKinsey & Company – *The State of AI in 2023: Generative AI’s Breakout Year*

3. [Harvard Business Review – *Collaborative Intelligence: Humans and AI Are Joining Forces*](https://hbr.org/2018/07/collaborative-intelligence-humans-and-ai-are-joining-forces)

4. [World Economic Forum – *Human-Centred AI for the Future of Work*](https://www.weforum.org/stories/2025/09/human-centric-ai-shape-the-future-of-work/)

5. [Gartner – *Overcoming Employee Resistance to AI-Driven Change*](https://www.gartner.com/en/newsroom/press-releases/2024-06-27-overcoming-employee-fears-of-ai-to-drive-business-value)

6. [OECD – *Principles on Artificial Intelligence*](https://www.oecd.org/en/topics/policy-issues/future-of-work.html)
